Volume Snapshots In Stardog

When running a datastore like Stardog it is important to take regular backups. However it is also important to consider the side effects that taking a backup can cause. Administrators have to balance the frequency of backups against the disruption to resources that can be caused creating that backup. If the backup process is CPU, memory, or IO intensive, care must be taken to make sure that it does not interrupt a period of heavy user activity. Further, the resources like disk storage must be balanced against the cost and capacity of disk space.

In this post we discuss the choices that Stardog Cloud made in implementing our backup system and how we leveraged cutting edge features provided by EKS to get the best possible outcome.

Volume Snapshots

Stardog Cloud runs on Amazon Web Services (AWS) Elastic Kubernetes Service (EKS). The Stardog cluster nodes themselves run as stateful sets in Kubernetes (k8s). The stateful sets use the persistent volumes (PVs) and Persistent Volume Claim (PVC) abstraction of k8s for storing data. Ultimately the PVs are backed by AWS’s Elastic Block Store (EBS).

K8s introduced the concept of a VolumeSnapshots in v1.17. VolumeSnapshots allow a user to request that snapshot of a PVC. This will take the contents of the data on the associated PV and begin the process of creating a snapshot of it. Going in the other direction, a PVC can later be made from a VolumeSnapshotContents and used with a k8s stateful set.

While this is a very convenient mechanism to leverage in backing up data it is important to consider the consistency of the data you are backing up. At the time the snapshot is taken it is crucial that the data you wish to backup has been synchronized to disk (not just residing in memory buffers) and that you do not continue writing until the snapshot is in a safe state to do so. The details of this depend on the specific drivers which are backing the k8s storage system. Note from this blog post:

Please note that the Kubernetes Snapshot API does not provide any consistency guarantees. You have to prepare your application (pause application, freeze filesystem etc.) before taking the snapshot for data consistency either manually or using some other higher level APIs/controllers.

Stardog Backups

Stardog has a feature commonly known as server backup. This command will instruct Stardog to take its current state and back it up to a location on the filesystem. Because of the magic of our starrocks index engine this is a fast and consistent process that can be done while the Stardog server is running and with minimal interruption to the resource that the Stardog server needs. When the command returns there is a consistent backup of the Stardog server. While this backup will be overwritten by future backups and thus cannot be used as a point-in-time source for future restoration, it is exactly what we need to safely use a k8s VolumeSnapshot as a backup.

In Stardog Cloud we run a cronjob that first instructs one of the nodes in the Stardog cluster to run a server backup. Once that is complete a VolumeSnapshot is created from the PV where that backup is stored. We later verify that the backup is valid and thus have a consistent backup stored in the k8s system which can be used for restorations at a later time.

The Magic of EBS

When thinking on top of just the k8s abstraction the above is a very straight forward, safe approach and complete backup system. However it is important to go a layer deeper to understand how resources are being used and their potential impact on the running system.

Backup Consistency

While the server backup command is enough to guarantee a consistent backup on the data volume at the time it was taken, we also need to be sure that the backup is not disrupted while it is being stored to the snapshot itself. As an example what if the snapshot process itself took so long that another backup was made on top of the existing one but before it was completed? We must make sure that the second backup does not corrupt the first and that the data remains consistent with its state at the time the backup was taken. Thankfully EBS handles this for us. When k8s creates the VolumeSnapshot it requests an EBS snapshot from AWS. This begins the backup process immediately but allows the user to continue to write data to the volume without disrupting the contents of the snapshot that is being created.

Incremental Storage

Another feature that EBS provides is incremental storage. When a snapshot is taken it is based upon any previous snapshot taken from the same volume and only the difference between the two are stored. Let us consider the case of a large Stardog database that is storing a few billion triples and taking up hundreds of gigabytes of storage. If we wish to take a backup of this datastore once a day and retain the last 30 days worth data we will be using potentially terabytes of storage just for backups. And worse most of that storage will be redundant. However because EBS provides us with incremental storage we will only be storing the small amount of data that has changed since the last backup was taken. This lets us same storage space, snapshot creation time, and network utilization for data transfer from the volume to the S3 where the snapshot is stored.

Conclusion

Stardog Cloud has a robust and efficient backup system that leverages the best practices and solid features of Kubernetes and Amazons Elastic Block Store. Check it out.

Keep Reading:

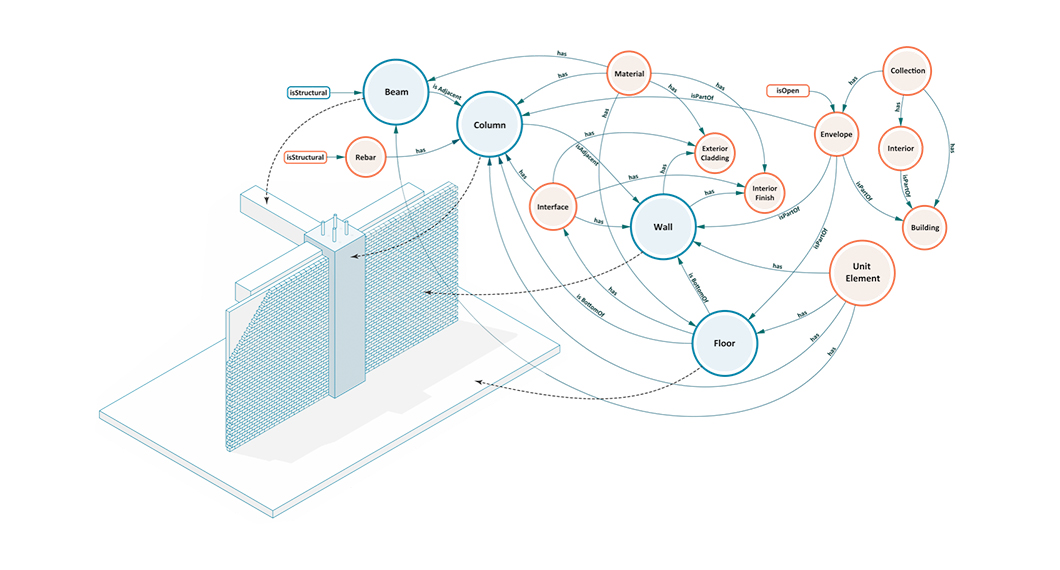

Buildings, Systems and Data

Designing a building or a city block is a process that involves many different players representing different professions working in concert to produce a design that is ultimately realized. This coalition of professions, widely referred to as AECO (Architecture, Engineering, Construction and Operation) or AEC industry, is a diverse industry with each element of the process representing a different means of approaching, understanding, and addressing the problem of building design.

Graph analytics with Spark

Stardog Spark connector, coming out of beta with 7.8.0 release of Stardog, exposes data stored in Stardog as a Spark Dataframe and provides means to run standard graph algorithms from GraphFrames library. The beta version was sufficient for POC examples, but when confronted with real world datasets, its performance turned out not quite up to our standards for a GA release. It quickly became obvious that the connector was not fully utilizing the distributed nature of Spark.

Try Stardog Free

Stardog is available for free for your academic and research projects! Get started today.

Download now