Knowledge Graphs for Data Science - Part 2

Data Mappings

In a previous post I showed how to explore some data using a graph paradigm, rather than the usual tabular arrangements used by relational databases, and Python’s Pandas dataframe. Running that analysis required importing CSV data files into Stardog. We need to tell Stardog what sort of graph structure we want for the data. Detailed documentation for this can be found in here and here. I’m going to run through the mappings I used to import the E.coli and weather data for that example. These mappings are contained in an sms file (Stardog Mapping Language), and are interpreted by Stardog-admin’s virtual import command.

Note that these mappings are not the only ones that could be used for these datasets, nor do they represent “best practices” for more complex situstions. They contain sufficient features for experimenting with small data sets.

Complete data for this example, and all of the mapping files, can be downloaded from Stardog’s example repo.

The E.coli data

"Beach ID" ,"Beach Name" ,"Sample Date" ,"Publish Date" ,"eColi Level" ,"Beach_Status" ,"Beach Advisory"

1 ,"Marie Curtis Park East Beach" ,"2007-06-03" ,"2007-06-04 13:39:01" ,45 ,"SAFE" ,"Beach safe. E.coli levels at or below 100 per 100mL of water."

2 ,"Sunnyside Beach" ,"2007-06-03" ,"2007-06-04 13:39:01" ,18 ,"SAFE" ,"Beach safe. E.coli levels at or below 100 per 100mL of water."

3 ,"Hanlan's Point Beach" ,"2007-06-03" ,"2007-06-04 13:39:01" ,10 ,"SAFE" ,"Beach safe. E.coli levels at or below 100 per 100mL of water."

...

About the data

There are several points to observe:

- The columns are separated by commas. Stardog can handle other delimiters, as explained below. Commas are the default.

- There is a header row. SMS will use the header values, exactly as spelled, to refer to their respective columns. SMS does not like names with spaces or dots ("."), so we will need to adjust for that.

The SMS mapping file

01: prefix : <http://stardog.com/>

02: prefix beach: <http://stardog.com/beaches/>

03: prefix tm: <http://stardog.com/time/>

04:

05:

06: MAPPING :Beaches

07: FROM CSV {

08:

09: }

10: TO {

11: ?beach_iri a beach:Beach;

12: rdfs:label ?beach_name .

13:

14: ?sample_iri a beach:Sample ;

15: beach:ecoli ?ecoli ;

16: beach:status ?Beach_Status .

17:

18: ?date_iri a tm:TimePoint ;

19: tm:byDay ?day ;

20: tm:byYear ?year .

21:

22: ?sample_iri beach:atBeach ?beach_iri .

23:

24: ?sample_iri tm:takenOn ?date_iri .

25: }

26: WHERE {

27: bind(template("http://stardog.com/beaches/m-{_ROW_NUMBER_}") AS ?sample_iri)

28: bind(template("http://stardog.com/beaches/b-{Beach ID}") as ?beach_iri)

29: bind(template("http://stardog.com/time/d-{Sample Date}") as ?date_iri)

30:

31:

32:

33: bind(xsd:date(sourceField("Sample Date")) as ?day)

34: bind(fn:year-from-date(?day) as ?year)

35:

36: bind(sourceField("Beach Name") as ?beach_name)

37:

38: bind(xsd:decimal(sourceField("eColi Level")) as ?ecoli)

39:

40:

41:

42: }

43:

Commentary by line number

Line 0 (Preliminaries). A mapping file contains 5 parts

- namespace definitiones

- Keyword

mappingand an optional mapping name - Keyword

fromand the structure of the source data,CSVin this case. The braces contain instructions for pulling data out of the source structure.SMSinfers this process forCSVfiles, and this section is left empty. - Keyword

to. Define the nodes and relationships we want in the destination knowledge graph - Keyword

where. Transform, filter and operate on the data, usingsparqlexpressions and some additionalSMSgoodies.

As you can see, SMS code resembles sparql and the turtle serialization of RDF graphs. Note that the keywords are not case sensitive.

Line 1 - 3: Prefixes allow me to abbreviate the IRI’s. For example, wherever you see, beach:, SMS inserts http://stardog.com/beaches/. The phony URL’s serve to create unique identifiers for the IRI nodes and relationships (edges). Since I work at Stardog, I include stardog.com in my own projects. Choose something that is meaningful to you.

Line 26: Let’s skip to the where block. In each of these lines, SMS is defining variables it will use in the to block.

Line 27: bind. bind is the sparql assignment function. bind(expression as ?var_name) creates a variable containing the results of expression. I could add the contents of two columns, take a logarithmic transformation, or implement a ternary operation (if true, then_this, else_that). Any legitimate sparql function can be used.

Line 27: template. This is SMS magic. In this line, I am creating IRI’s for each E.coli measurement entity. The prefix trick doesn’t work here, so I need to write out the URL piece. {_ROW_NUMBER_} is another SMS utility. This line will create an IRI for each row of the beaches_data.csv file. The first row will have IRI http://stardog.com/beaches/m-1. I can refer to these in the to block through ?sample_iri.

Line 28: {Beach ID} takes the values from column Beach ID and builds an IRI from it. Be sure to keep the same spelling and capitalization as in the CSV file. I can give ?beach_iri any name I want, except the exact column header name.

Line 33: Two things going on here.

- The header name

Sample Datecontains a space, whichSMSdoesn’t like. FunctionsourceFieldallows for header names with illegal characters. xsd:date.SMSimports everything as a string. Functionxsd:dateconverts to date format, so thatsparqlqueries will do the “right thing”, like sort by date or filter within a range.

Line 34: fn:year-from-date. Stardog includes a number of functions from public math ontologies. You can use these in mappings or queries. Note that I’m taking a variable defined in the previous bind line, ?day and using it to create a second variable.

Line 36: I need to use sourceField because of the space.

Line 37: SMS does not infer data types. I use another xsd datatype function to convert E.coli measurements to numeric.

Line 10: Now I’m ready to describe the graph and express some relationships.

Line 11-12: An IRI is created for each beach. A data node is created for the name of the beach. rdfs is a famous ontology for building up knowledge graphs. rdfs:label is used to relate IRI’s to appropriate names. I could have constructed my own relationship - say, something like beach:name, but where a public ontology exists, it is often best to use it. The rdfs namespace is loaded automatically by Stardog.

Line 16. Beach_Status is a legal variable name in SMS, so I didn’t need to use sourceField to import this column. SMS automatically associates that column in the CSV file to a variable with the same name: ?Beach_Status. Line 16 creates a relationship, beach:status, between the beach entity, and the data contained in column Beach_Status.

The weather data

03: prefix : <http://stardog.com/>

04: prefix weather: <http://stardog.com/weather/>

05: prefix tm: <http://stardog.com/time/>

06:

07:

08: MAPPING :Weather

09: FROM CSV {

10:

11: }

12: TO {

13: ?msnts a weather:Sample ;

14: weather:hasTemp ?temp ;

15: weather:hasHum ?humidity ;

16: weather:hasRain ?rain ;

17: weather:hasDateString ?hourString .

18:

19: ?date_iri a tm:TimePoint ;

20: tm:byDay ?day .

21:

22: ?msnts tm:takenOn ?date_iri .

23:

24:

25: }

26: WHERE {

27: bind(template("http://stardog.com/weather/w-{_ROW_NUMBER_}") as ?msnts)

28: bind(xsd:decimal(sourceField("Temp (°C)")) as ?temp)

29: bind(xsd:decimal(sourceField("Rel Hum (%)")) as ?humidity)

30: bind(concat(replace(sourceField("Date/Time (LST)"), " ", "T"), ":00") as ?hourString)

31: bind(if(contains(sourceField("Weather"), "Thunder") || contains(sourceField("Weather"), "Rain"), 1, 0) as ?rain)

32:

33:

34:

35: bind(iri(concat("http://stardog.com/time/d-", substr(?hourString, 0, 11))) as ?date_iri)

36:

37:

38: bind(xsd:date(substr(?hourString, 0, 11)) as ?day)

39:

40:

41:

42:

43: }

44:

The weather data has a simpler structure than the E.coli data. Basically, I want to create one IRI node for each row of the file, and relate that node to data from the humidity, temperature and precipitation fields. Rain is a bit tricky. The Weather column contains a string annotation about the weather in that hour: rain, snow, fog, etc. It is left blank if the weather is fine. Sometimes, two annotations are made. To further complicate matters, thunderstorms are marked “Thunder”, with no rain, although one never gets dry thunder in Toronto. I want to score each hour with 1 for rain and 0 otherwise.

Datetimes are taken hourly, so I need to connect each measurement to the day it was made, using the same temporal nodes that were created through the beach data.

Commentary

Line 30: Dates are stored in minutes, like “2011-06-01 11:00”. sparql datetime functions want format 2011-06-01T11:00:00. This line makes the conversion and stores the result in ?hourString.

Line 31: Some text manipulation, a conjunction and a ternary if. Sweet.

Line 35: Truncates ?hourString to a date string, concatenates with the same IRI base as for dates in the beaches portion of the graph. This will create a new node, if that date has not been encountered before. Otherwise, it will connect the weather data to the E.coli data through date.

Line 38: I also want to store the date as a literal with a datetime datatype.

Making the import

With a mapping file, I can import my CSV’s into Stardog. The database must already exist.

stardog-admin virtual import beachDB beach.sms beach_data.csv

stardog-admin virtual import beachDB weather.sms weather_data.csv

virtual-import has a number of optional parameters, including a properties file that allows for some formatting issues. The properties file is entered after the database in the virtual import statement.

stardog-admin virtual import beachDB beach.properties beach.sms beach_data.csv

For example, if my CSV was tab separated with no header, I would need to include the following lines in the properties file:

csv.separator=\t

csv.header=false

No header, no problem

When a CSV has a header, SMS uses the header values as referents for the import. In the absence of a header, columns are referred to by column number, starting at 0, thus: ?0, ?1, ?2 ...

Keep Reading:

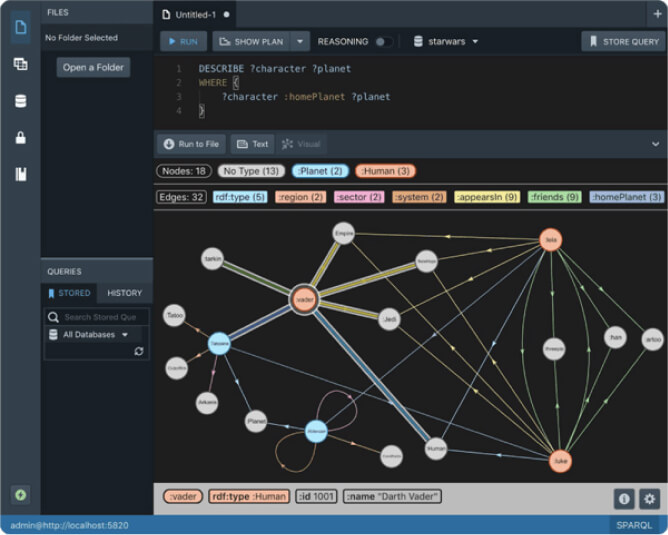

Unified Process Monitoring

Did you ever have a slow query, export or any other operation and wondered “Is this ever going to finish?!”. Many operations such as SPARQL queries, db backups etc have performance characteristics which may be hard to predict. Canceling rogue queries was always possible, but there was not yet a way to do so for other potentially expensive operations. If this ever affected you then the new unified process monitoring feature we added in Stardog 8.

Chaos Testing Stardog Cluster for Fun and Profit

At Stardog we work hard to build software that’s not only performant but also extremely robust. Stardog Cluster is a highly available, key component to ensuring Stardog remains up and running for our customers. However, every distributed system is susceptible to faults and bugs wreaking havoc on it. Most software testing checks the “happy path” through the code to verify it behaves as designed. Moving a step further, tests can check some obvious failure cases; for example, if one service in a deployment is terminated.

Try Stardog Free

Stardog is available for free for your academic and research projects! Get started today.

Download now