Activate Metadata as Part of your Semantic Layer with Stardog's Integration of Databricks Unity Catalog

Organizations across verticals and around the world continue to struggle to turn the vast amounts of data available to them, from a diverse set of internal systems and external sources, into actionable insight that drives business strategy. Data lives in potentially hundreds or thousands of diverse systems within the enterprise, stored either in formats based on relational database technologies or in proprietary formats in data lakes, which makes it difficult to decipher this data and orient it around specific use cases and strategic business objectives.

Even in the case of a platform where the intent is to consolidate data from across different silos, such as traditional Master Data Management or data warehouse architectures or data lakes, or more modern approaches such as the lakehouse, data from different domains is often isolated to the extent that it is difficult to integrate across domains to achieve key strategic objectives.

Deriving insight from enterprise data requires business context to triangulate and link data across complex systems and domains. The business process for creating that context within an enterprise data ecosystem is frequently supported by introspecting enterprise data catalogs or metadata management tools to understand where data lives and whether it is fit for purpose for the use case at hand. This metadata can be used as a foundation for building an effective semantic data layer that spans domains and use cases and powers true data democratization.

The efforts to wrangle data from across an organization have been further enabled by the advancement of modern data architectures in support of analytics and AI. Leading innovators like Databricks are supporting cost-effective approaches to storage/compute layers and providing the tools for data scientists and data engineers to process and analyze data at scale, making processes and functions like AI and ML true business drivers.

While modern platforms and architectures have enabled a shift from ETL to ELT, reducing the need for maintaining expensive batch-focused pipelines working within the confines of traditional MDM or Data Warehousing systems, there is much to be done to fully realize the promise of data democratization.

Enabling non-technical users to self-serve and collaborate involves a lot more than simply hooking up a BI tool. That approach leaves a lot to be desired in terms of reducing latency, promoting collaboration and re-use through an easy-to-understand vocabulary, providing context by connecting data across domains, enabling self-service through data exploration, and enriching analytics by inferring new insights.

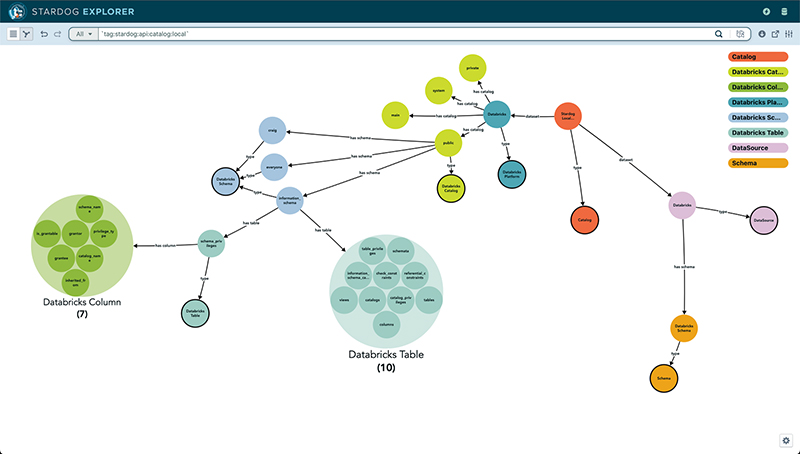

A semantic model provides the foundation for shared understanding across the organization to support a knowledge-first approach to data management and analytics. The semantic data layer can be represented through simple tools as familiar as a whiteboard. Building a semantic model can be done using Stardog’s Enterprise Platform through Designer.

Basic models with a few entities can serve as a starting point. Layers of business context may be added to answer additional questions. Mapping data from the Lakehouse into your model supports materializing or virtualizing data to enable an agile Data Fabric.

A data catalog provides context around the provenance and use of data across the enterprise and important dimensions of data quality and integrity such as uniqueness, completeness, and accuracy.

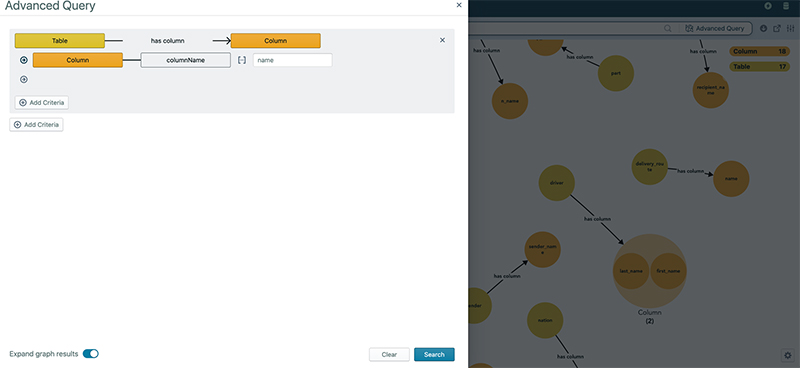

When focusing on the process of modeling a semantic data layer around a knowledge graph, it makes sense to start from the perspective of exploring available metadata that describes key domains, entities, and relationships needed to answer questions relevant to gaining insight and meeting objectives for compliance, analytics, and AI use cases. A data catalog serves as a good starting point in that journey, when in place.

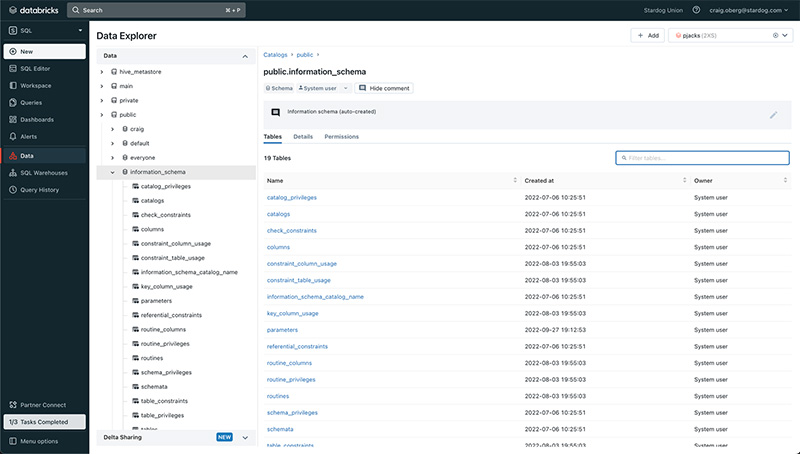

Unity Catalog from Databricks provides the foundation for context across and within the lakehouse. Unity Catalog is a unified governance solution for all data and AI assets including files, tables, and machine learning models in your lakehouse on any cloud. With a single interface based on ANSI SQL to manage access permissions, Unity Catalog helps data teams simplify data and AI governance. Data teams can define access policies once and enforce said policies across all workloads and workspaces. Unity Catalog also provides centralized fine-grained auditing by capturing an audit log of actions performed against the data and helps to meet compliance and audit requirements.
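To make the "define access policies once" idea concrete, here is a minimal sketch in Python that renders a small list of policies as SQL `GRANT` statements of the kind Unity Catalog accepts. The catalog, schema, table, and group names (`main.sales.orders`, `analysts`, `data-engineers`) and the `AccessPolicy` helper are hypothetical, chosen only for illustration. The sketch only builds the statement text; running the statements would require a Databricks SQL session, which is not shown here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessPolicy:
    """One access rule. All values used below are illustrative assumptions."""
    privilege: str   # e.g. "SELECT" or "MODIFY"
    table: str       # three-level name: catalog.schema.table
    principal: str   # user or group receiving the privilege


def to_grant_sql(policy: AccessPolicy) -> str:
    """Render a policy as a SQL GRANT statement (text only, not executed)."""
    return (
        f"GRANT {policy.privilege} ON TABLE {policy.table} "
        f"TO `{policy.principal}`"
    )


# Hypothetical policies, declared once in a single place.
policies = [
    AccessPolicy("SELECT", "main.sales.orders", "analysts"),
    AccessPolicy("MODIFY", "main.sales.orders", "data-engineers"),
]

statements = [to_grant_sql(p) for p in policies]

for statement in statements:
    print(statement)
```

Keeping the policies as plain data and generating the statements from them is one way to keep the rules reviewable in a single place; other approaches would work equally well.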

With automated and real-time data lineage across tables, columns, notebooks, workflows, and dashboards, business users can perform data quality checks, complete an impact analysis of data changes, and debug any errors in their data pipelines.

Our integration with Databricks Unity Catalog provides users with a common business vocabulary and access to their Unity Catalog metastore (catalog, schema, table, and column resources) as part of the unified semantic layer.
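As a rough illustration of how catalog, schema, table, and column resources could appear as connected nodes in a graph, the sketch below flattens a small, made-up metastore hierarchy into subject-predicate-object triples. It is not Stardog's actual integration or vocabulary: the `ex:` terms, the sample metadata, and the `metastore_to_triples` function are all assumptions made for this example.

```python
from typing import Dict, List, Tuple

Triple = Tuple[str, str, str]

# Hypothetical metadata: catalog -> schema -> table -> list of column names.
Metastore = Dict[str, Dict[str, Dict[str, List[str]]]]

metastore: Metastore = {
    "main": {
        "sales": {
            "orders": ["order_id", "customer_id", "amount"],
            "customers": ["customer_id", "name"],
        }
    }
}


def metastore_to_triples(store: Metastore) -> List[Triple]:
    """Walk the hierarchy and emit a type triple plus a parent link per resource."""
    triples: List[Triple] = []
    for catalog, schemas in store.items():
        triples.append((catalog, "rdf:type", "ex:Catalog"))
        for schema, tables in schemas.items():
            schema_id = f"{catalog}.{schema}"
            triples.append((schema_id, "rdf:type", "ex:Schema"))
            triples.append((catalog, "ex:hasSchema", schema_id))
            for table, columns in tables.items():
                table_id = f"{schema_id}.{table}"
                triples.append((table_id, "rdf:type", "ex:Table"))
                triples.append((schema_id, "ex:hasTable", table_id))
                for column in columns:
                    column_id = f"{table_id}.{column}"
                    triples.append((column_id, "rdf:type", "ex:Column"))
                    triples.append((table_id, "ex:hasColumn", column_id))
    return triples


triples = metastore_to_triples(metastore)

for subject, predicate, obj in triples:
    print(subject, predicate, obj)
```

Once metadata is expressed this way, a question such as "which tables contain a `customer_id` column" becomes a simple pattern match over the triples, which is the kind of cross-domain linking a semantic layer is meant to support.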

Modern enterprises need to develop a Data Governance Framework. For effective governance, this is generally underpinned by a catalog metastore. Unity Catalog provides that metastore across the lakehouse enabling business users to:

Stardog is the first partner in the Partner Connect ecosystem that fully supports Unity Catalog.

Stardog is now available on Databricks Partner Connect. Read how to get started.
