Stardog for Executives: Trusted AI for Confident Decisions

Executive decisions increasingly depend on AI-driven insights, forecasts, and recommendations. But as AI systems become more involved in decision-making, leaders face a new challenge: trusting not just the data, but the AI itself.

When AI lacks context, it produces confident answers that may be technically correct and strategically wrong. Ambiguous terms, shifting definitions, and disconnected systems create blind spots AI cannot resolve on its own. Without consistent definitions and governance, AI scales uncertainty rather than insight.

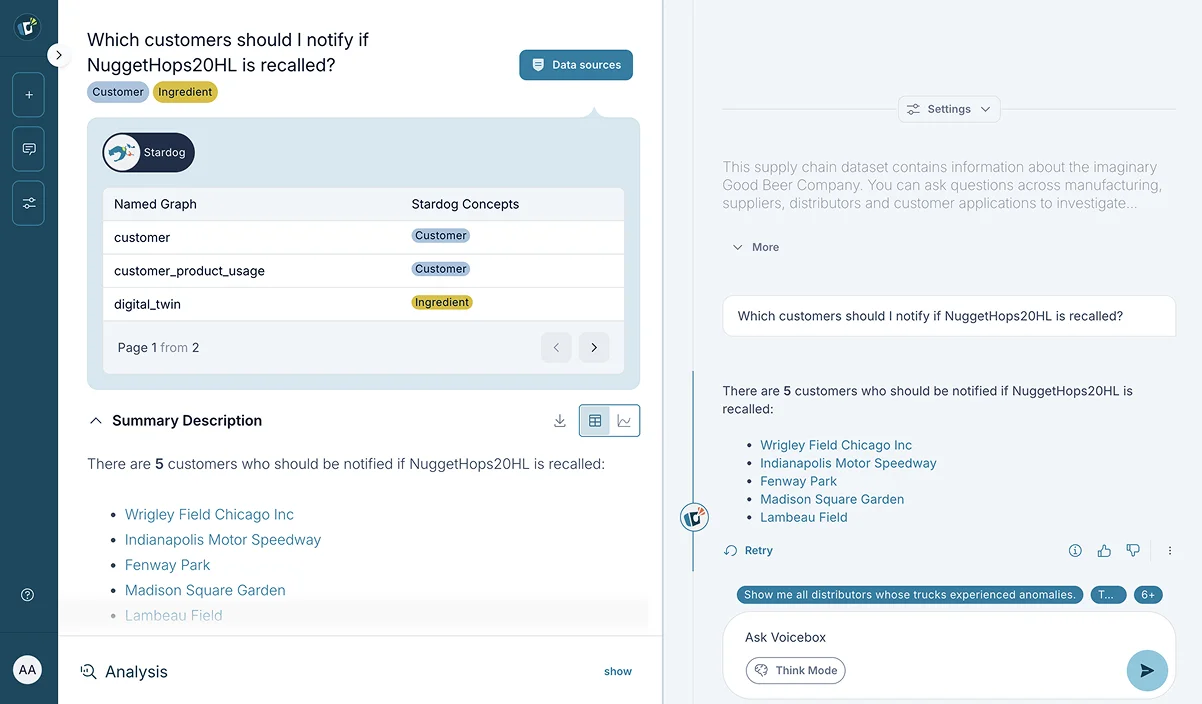

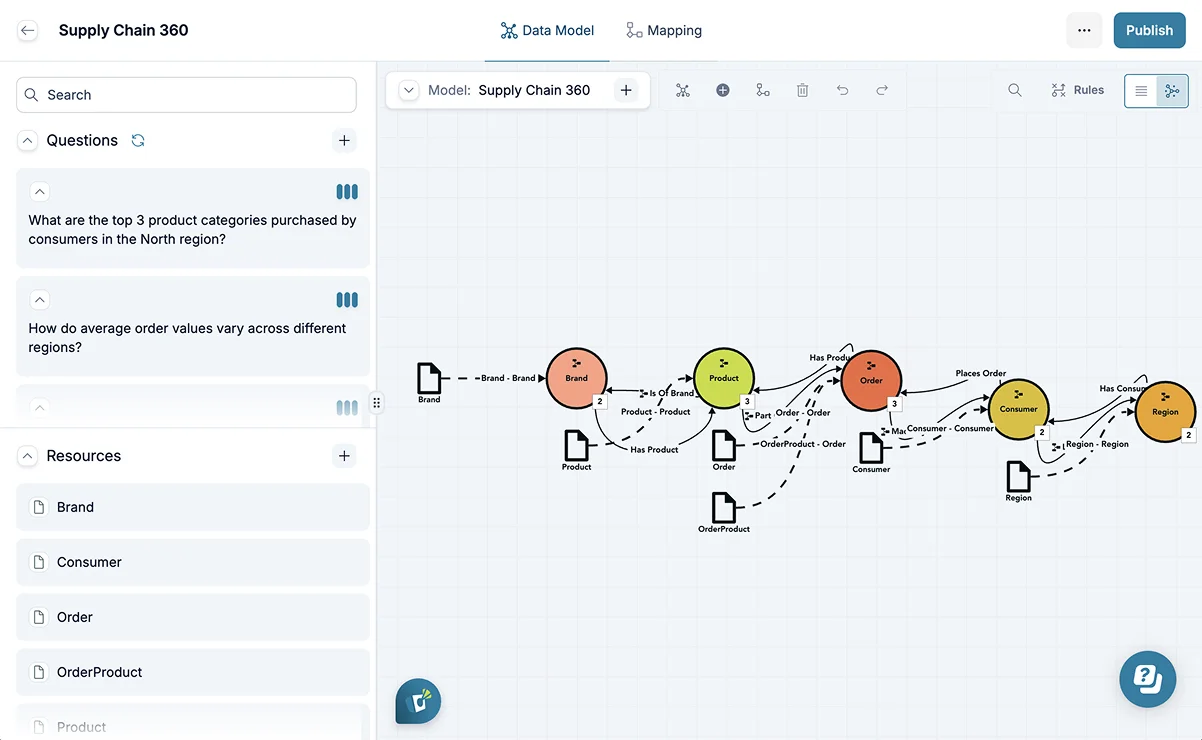

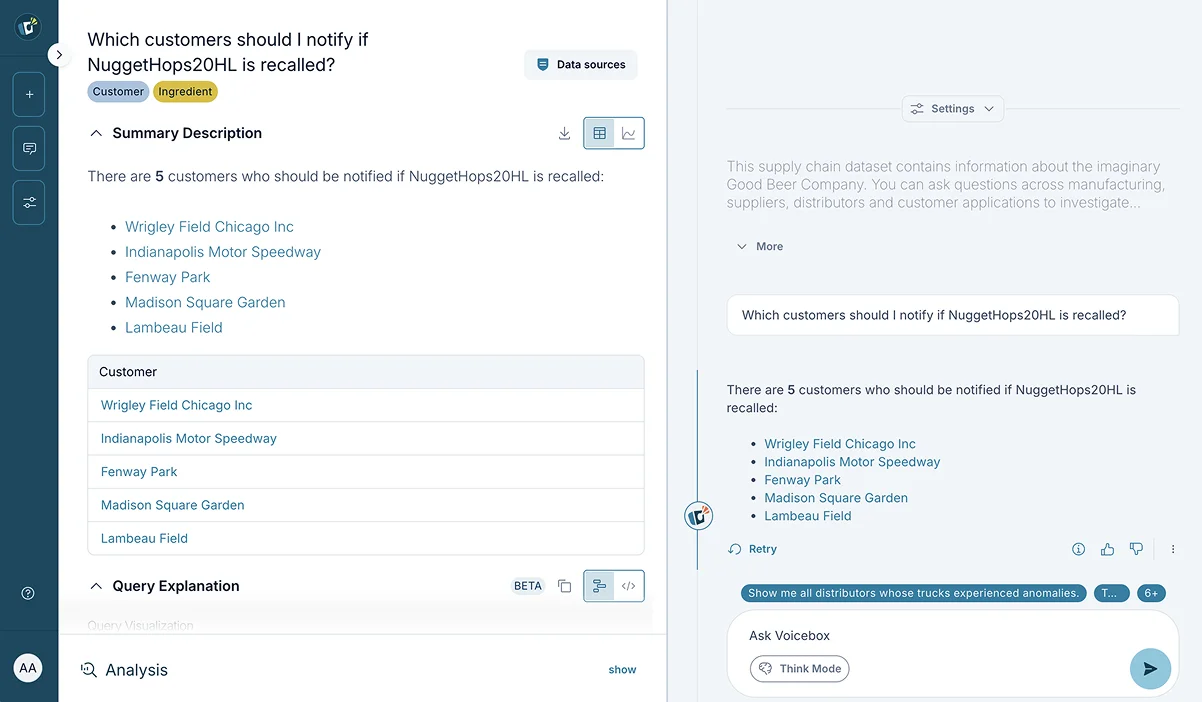

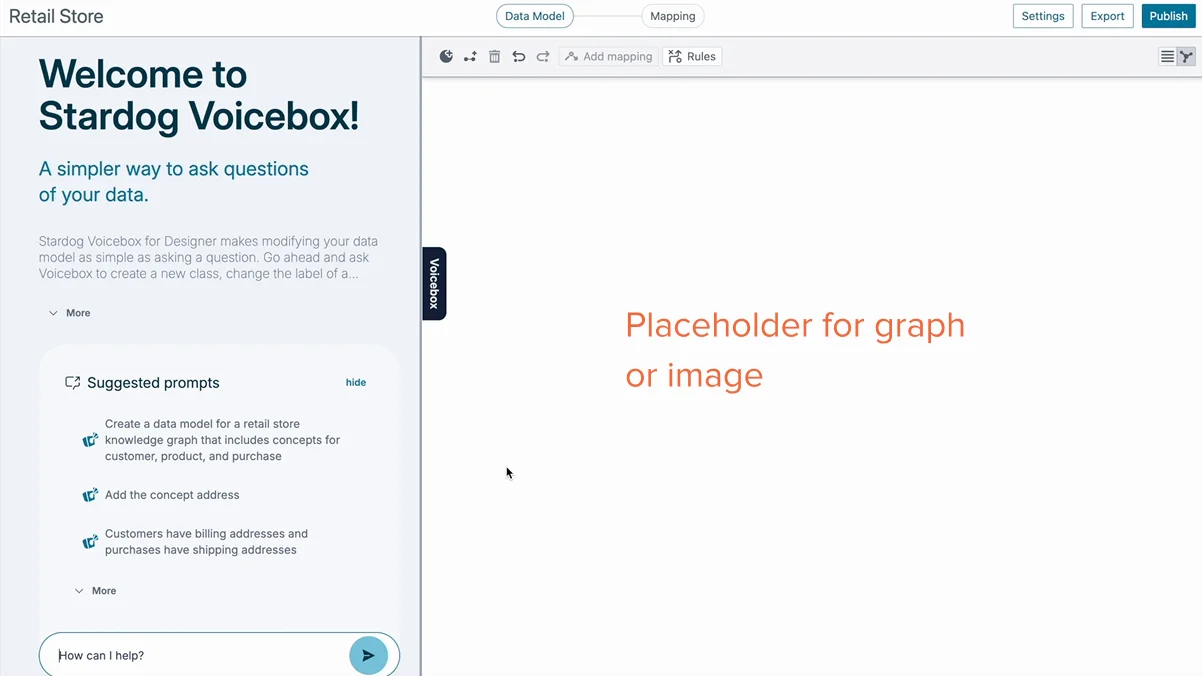

Stardog addresses this with a knowledge graph–powered semantic layer that unifies disparate enterprise data sources by business context and meaning. That layer gives AI the semantics it needs to deliver accurate answers, along with the traceability and guardrails required for enterprise scale and compliance. The result is a control-tower view of the business, where leaders can rely on AI-driven insights without second-guessing the meaning behind them. Instead of reconciling answers across teams and tools, executives see a consistent, scalable view of the enterprise that holds up as AI use expands.

When AI lacks semantic context, executive decision-making stalls. The underlying challenge isn’t insufficient data, but rather that the same data means different things in different parts of the organization. Deploying AI in this fragmented environment doesn’t solve the problem. It magnifies and accelerates it.

Enterprises operate in environments built at different times, for different purposes, and with different assumptions about meaning. Even without AI, these disconnected systems produce conflicting answers that human analysts struggle to reconcile. AI does not create this problem, but by operating at machine speed across fragmented definitions and sources, it multiplies the ambiguity. It delivers multiple answers to the same question with equal confidence, forcing executives to confront inconsistency faster, more broadly, and with greater risk than ever before.

Common business terms often carry multiple meanings, and humans instinctively recognize when clarification is needed. AI does not. When an AI agent encounters the word “Bear,” it cannot tell the animal from the verb or a company name without context. It selects a meaning and proceeds. In a business context, the same issue applies to terms like customer, revenue, or risk. Without shared semantic context, AI-generated insights may appear precise while reflecting the wrong interpretation, making them difficult for executives to trust.

AI systems do not reason about enterprise data from first principles. They apply the patterns and assumptions learned from their training data to enterprise data as well, projecting familiar structures and relationships based on similarity rather than intent. When enterprise definitions are implicit, inconsistent, or undocumented, AI fills the gaps by applying out-of-context background knowledge to business data and processes. The result is output that appears authoritative but reflects the wrong meaning, allowing ambiguity to move quickly into decisions unless semantic meaning and governance are made explicit.

In human-driven workflows, manual reconciliation acts as a safeguard against inconsistency and misinterpretation. In AI-driven workflows, that safeguard disappears. AI moves faster than people can validate assumptions, and by the time issues surface, decisions may already be in motion. Without shared semantic context in place upfront, organizations trade control for speed, which increases risk at the executive level.

AI can only be trusted when it operates within shared meaning and clear boundaries. Stardog’s knowledge graph–powered semantic layer gives AI systems the context they need to reason over enterprise data using governed definitions, relationships, and rules that reflect how your business actually operates.

Enterprise data breaks down when systems don’t understand how they relate to one another. Finance, operations, customer, and risk data live in different platforms, each reflecting different assumptions. Stardog uses an enterprise knowledge graph to connect this data where it already lives, capturing relationships across systems without forcing migration or replatforming.

These connections provide more than access. They give AI systems the structural context needed to reason across domains instead of pulling isolated facts. Executives gain a clearer line of sight across the business, and AI-driven insights reflect how information is actually connected, not just where it was sourced.

Connecting data does not resolve inconsistency if teams define the same concepts differently. The semantic layer captures how the organization understands key business terms like customer, revenue, exposure, or risk and applies those definitions consistently across systems. Instead of relying on implicit assumptions embedded in reports or models, context is made explicit and reusable.

This shared semantic context is what allows AI systems to operate reliably. AI agents no longer need to infer meaning from fragmented sources or conflicting logic. They reason over the same definitions executives use to make decisions, making AI-driven insights more consistent, explainable, and aligned with how the business actually operates.

Trust breaks down when it’s unclear how data can be used, by whom, and for what purpose. Governed access applies consistent rules at the semantic level, controlling how data is exposed across analytics, applications, and AI use cases. Instead of embedding logic in individual tools or models, policies live alongside shared meaning and relationships.

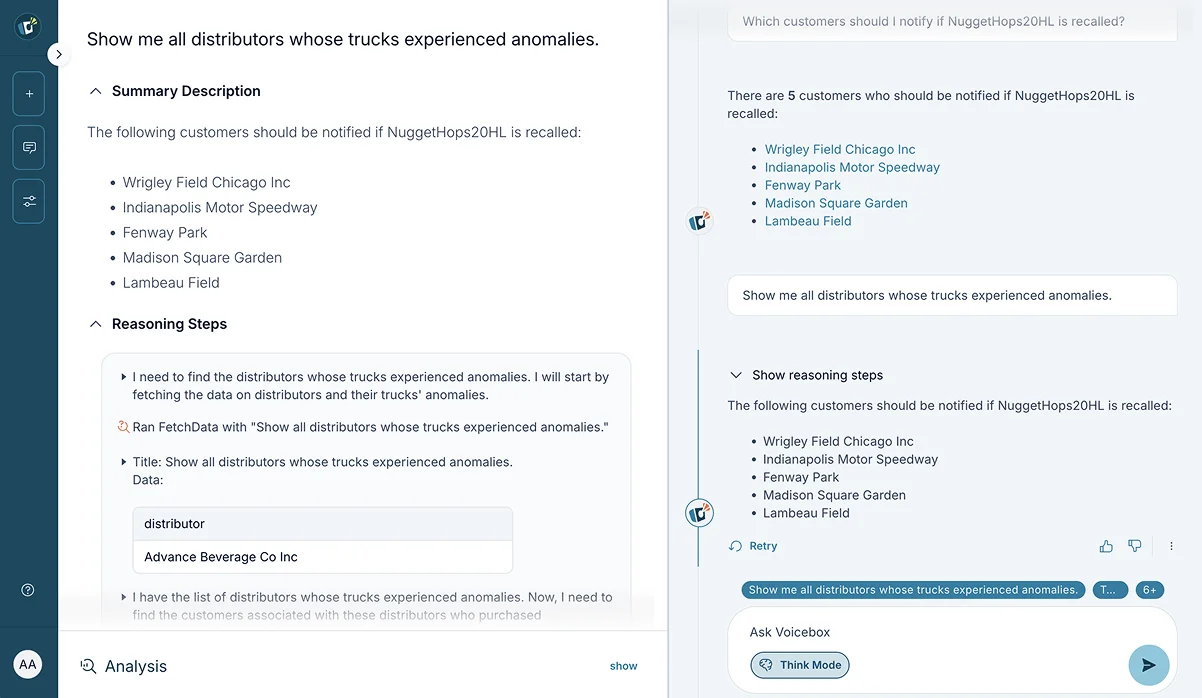

To enable trusted AI, these guardrails are essential. AI operates within approved definitions, access boundaries, and usage constraints, reducing the risk of unintended or unexplainable outcomes. Executives gain confidence not because AI is restricted, but because its reasoning is transparent, traceable, and aligned with how the business governs information.
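For technical readers, the idea of policies living alongside shared meaning can be sketched in a few lines of Python. This is an illustration of the pattern only, not Stardog's API: the `POLICIES` table, the role names, and the `can_read` and `fetch` helpers are all hypothetical and invented for this example.

```python
# Hypothetical policy table: access rules are attached to business terms,
# not to the individual tools or models that consume them.
POLICIES = {
    "customer_risk_score": {"risk", "compliance"},
    "recognized_revenue": {"finance", "risk", "sales"},
}

# Hypothetical values that a dashboard, application, or AI agent might request.
VALUES = {"customer_risk_score": 0.82, "recognized_revenue": 90.0}


def can_read(role: str, term: str) -> bool:
    """A role may read a term only if the policy for that term lists it."""
    return role in POLICIES.get(term, set())


def fetch(role: str, term: str, values: dict) -> float:
    """Single entry point: every consumer passes through the same check."""
    if not can_read(role, term):
        raise PermissionError(f"role '{role}' may not read '{term}'")
    return values[term]
```

Because every consumer calls the same `fetch`, a request such as `fetch("sales", "customer_risk_score", VALUES)` is refused in one place rather than relying on each tool to enforce the rule separately.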

CIOs are responsible for enabling innovation while maintaining control across an increasingly complex technology landscape. As AI adoption accelerates, CIOs must establish consistent context, shared meaning, and clear governance so AI-driven insights can be trusted and scaled across their business.

Business teams ask for answers. IT is asked to deliver them. But alignment breaks down when the same metric carries different meaning depending on who is asking or which system holds the data. “Customer lifetime value” means one thing in the sales dashboard, another in the finance forecast, and something else in the AI model predicting churn. Definitions scattered across spreadsheets, dashboards, and tribal knowledge force IT into endless cycles of clarification and reconciliation, slowing decisions and eroding trust in AI outputs.

Stardog anchors business definitions directly to the data through its semantic layer. Business terms are defined once and applied consistently across analytics, applications, and AI systems. IT no longer has to reinterpret metrics every time a new request comes in. Definitions are governed, reusable, and aligned with how the business actually operates.

CIOs don’t get to choose which systems the organization runs. They have to make all of them work together: legacy ERP, cloud CRM, on-premises data warehouses, SaaS applications. Each integration project brings custom code, brittle connectors, and technical debt that accumulates faster than teams can pay it down. When AI initiatives require data from multiple systems, the integration burden multiplies, and timelines stretch from weeks into months.

Stardog connects those systems without requiring migration or consolidation. The knowledge graph captures relationships across platforms where data already lives, providing AI with the context it needs to reason across systems without custom integration work. When systems change or new ones are added, the semantic layer adapts without breaking downstream analytics and AI applications.

As AI adoption expands, IT loses visibility into how AI systems are interpreting data, which definitions they’re applying, and whether they’re respecting access controls. AI agents built by different teams use different assumptions, creating a consistency nightmare where no one can explain why two AI systems give conflicting answers to the same question. Manual oversight doesn’t scale, and by the time issues surface, AI may have already made decisions based on wrong context or unauthorized data.

Stardog applies governance at the semantic level, establishing clear guardrails for how AI systems access and interpret enterprise data. Access rules, business definitions, and usage policies are applied consistently across all AI applications. When executives question an AI-generated insight, IT can trace exactly which data was used, how it was interpreted, and whether the system operated within approved boundaries, making AI adoption faster and safer.

CTOs and IT leaders are expected to modernize systems without disrupting what already works. As AI adoption accelerates, architectures continue to grow while tolerance for complexity shrinks. The challenge is no longer just scaling infrastructure, but establishing consistent context and clear boundaries so systems and AI can evolve without increasing risk.

Every new AI initiative adds another layer to an already complex stack. Logic gets embedded in pipelines, transformation scripts, API layers, and individual models. The same business rule exists in five different places, written in three different languages, maintained by two separate teams. When a definition needs to change, no one knows where it lives. When AI systems produce conflicting results, tracing the source becomes an archaeology project through scattered code and documentation.

Stardog centralizes business logic in a semantic layer, allowing systems and AI to reference consistent definitions and relationships instead of duplicating rules everywhere. When business meaning changes, it changes once. AI systems and analytics automatically inherit the update without requiring teams to hunt down and modify code across the architecture.

Core systems that run the business are too critical to replace and too outdated to extend easily. CTOs need to make decades-old platforms accessible to modern analytics and AI without the risk and cost of full migration. Traditional approaches mean building custom APIs, maintaining brittle middleware, or copying data into yet another system, each option adding technical debt and points of failure.

Stardog connects legacy and modern systems through a knowledge graph that captures relationships across platforms without requiring data movement. Legacy systems stay in place while AI and analytics gain access to their data through a semantic layer. When new systems are added or old ones retired, the semantic layer adapts without breaking everything downstream.

AI that works in a pilot often fails in production. Models trained on one definition of “customer” break when applied to data using a different definition. AI agents built by different teams can’t work together because they don’t share context. As AI use cases multiply, inconsistencies compound, creating a sprawl of fragile implementations that CTOs can’t trust to scale without constant intervention and troubleshooting.

Stardog provides a shared semantic foundation that AI systems build on from the start. Models and agents reference the same definitions, relationships, and context, reducing unexpected behavior as new use cases are added. CTOs gain confidence that growth in AI capability doesn’t come at the expense of stability or create a maintenance burden that scales faster than the value delivered.

CDOs are accountable for data trust across the organization. As AI becomes more embedded in analytics and decision-making, that responsibility extends beyond data quality to establishing semantic clarity, transparency, and context that AI systems can rely on at scale.

The CDO’s nightmare is simple: ask three departments for “total revenue” and get three different numbers, each defensible within its own context. Sales counts bookings. Finance counts recognized revenue. Operations counts something else entirely. Every team built their own logic, documented it their own way, and now AI systems inherit this fragmentation. When an AI model trains on sales data but gets applied to finance data, it produces confident answers based on incompatible definitions. The CDO becomes the referee in endless debates about whose version is “correct” instead of building a foundation the entire organization can trust.

Stardog uses an enterprise knowledge graph to make business meaning explicit by capturing how key concepts relate across the organization. Instead of debating definitions after AI produces conflicting results, the CDO establishes shared semantic context upfront. Analytics and AI operate with consistent meaning, eliminating the reconciliation cycles that slow decision-making and erode trust in data-driven insights.
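To make the "three numbers" problem concrete, here is a minimal Python sketch of what establishing definitions upfront can look like. It illustrates the idea rather than Stardog's implementation: the `Deal` record, the sample figures, the `GOVERNED_DEFINITIONS` registry, and the `total` helper are hypothetical names made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deal:
    booked: float      # contract value signed (the sales view)
    recognized: float  # revenue recognized to date (the finance view)


# Hypothetical sample data.
DEALS = [Deal(100.0, 40.0), Deal(50.0, 50.0), Deal(200.0, 0.0)]

# Each business term is defined once; every report or model looks it up here.
GOVERNED_DEFINITIONS = {
    "bookings": lambda deal: deal.booked,
    "recognized_revenue": lambda deal: deal.recognized,
}


def total(term: str, deals: list) -> float:
    """Sum a governed measure; reject terms that have no agreed definition."""
    if term not in GOVERNED_DEFINITIONS:
        known = ", ".join(sorted(GOVERNED_DEFINITIONS))
        raise ValueError(f"'{term}' is not a governed term; use one of: {known}")
    measure = GOVERNED_DEFINITIONS[term]
    return sum(measure(deal) for deal in deals)
```

With this in place, `total("bookings", DEALS)` and `total("recognized_revenue", DEALS)` return different figures, each clearly labeled, while an ambiguous request such as `total("total revenue", DEALS)` is rejected instead of silently resolving to one team's interpretation.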

An AI system flags a customer as high risk. The business asks why. The data team investigates and finds the answer buried across six systems, three transformation scripts, and assumptions made by a data scientist who left the company two years ago. By the time anyone understands what happened, trust in the AI system is gone. Without clear lineage and transparent logic, CDOs can’t explain AI outputs, can’t reproduce results, and can’t defend decisions when regulators or executives demand answers.

Stardog preserves lineage, relationships, and applied logic within its semantic layer. When an AI system produces an unexpected result, CDOs can trace insights back to their sources and understand exactly how meaning was applied. This transparency supports explainable analytics and AI outcomes, turning “the AI said so” into a defensible chain of reasoning that stakeholders can verify and therefore trust.

Self-service analytics promises speed, but it delivers chaos. Business analysts build their own dashboards using their own interpretations of what “customer churn” or “product margin” means. Each new report adds another definition to the pile. When AI tools amplify this self-service approach, the semantic drift accelerates. The CDO faces an impossible choice: lock down access and slow the business, or allow freedom and watch data meaning fragment beyond repair.

Stardog governs self-service at the semantic level, allowing teams to explore data freely while relying on consistent definitions, relationships, and constraints. Business users get the speed and flexibility they need. The CDO gets consistent definitions and usage patterns they can actually govern. Analytics and AI adoption scales without sacrificing the semantic consistency that makes insights trustworthy.

Data and AI leaders are accountable for turning insight into action that the business can trust. As AI moves from experimentation into production, success depends on grounding models and analytics in shared meaning, clear context, and governance that scales across use cases.

The demo works perfectly. Stakeholders are impressed. Then the AI system goes live and immediately produces results the business can’t use. It classifies customers differently than the sales team expects. It calculates risk using assumptions finance doesn’t recognize. It flags issues operations has never seen flagged that way before. The problem isn’t the algorithm. The problem is that the pilot data had one set of definitions and production systems had a dozen others. Data and AI teams spend months debugging issues that trace back to semantic inconsistencies no one documented during development. Each new AI initiative repeats this cycle, burning budget and credibility.

Stardog grounds AI in shared meaning and relationships from the start. Models train and operate using the same governed definitions, reducing the gap between pilot performance and production reality. When AI systems move from development to deployment, they carry forward the semantic context that makes them work reliably, allowing CDAOs and CDAIOs to scale AI initiatives without constant firefighting.

Analytics teams produce technically accurate reports that executives immediately question. Revenue numbers don’t match what finance reported. Customer counts conflict with what sales is tracking. The analysis is correct based on the data used, but the data doesn’t reflect how the business actually defines these concepts. AI amplifies this problem by generating insights at scale, all of them technically valid and strategically misleading. Data and AI leaders spend more time defending their work than delivering new value.

Stardog connects metrics, entities, and relationships within the same semantic layer that executives use to understand the business. Analytics and AI outputs reflect not just what happened in the data, but what it means in business context. Results align with how leaders think about the organization, making insights easier to trust and act on without endless reconciliation.

An AI system recommends a major strategic shift. Executives want to understand why before committing resources. The data team can explain the algorithm but can’t clearly trace how business context influenced the output. Which customer segments were included? What definition of “high value” did the model use? How did it weigh different risk factors? Without clear answers, the recommendation stalls. AI becomes a black box that leadership doesn’t trust enough to follow, no matter how sophisticated the underlying technology.

Stardog makes AI outputs explainable by preserving the semantic context used throughout the analysis. When executives ask how AI reached a conclusion, data and AI teams can show exactly which definitions were applied, which relationships mattered, and how business rules influenced the result. This transparency transforms AI from an opaque tool into a trusted advisor that leadership is willing to act on.

Financial decisions depend on understanding what numbers represent and how they connect to the rest of the business. As analytics and AI play a larger role in financial planning and reporting, CFOs need confidence in how numbers are defined and derived across the organization.

Finance reports revenue down 8%. Operations reports volume up 12%. Both are correct based on their own data, but CFOs can’t make decisions when the numbers don’t tell a coherent story. Revenue doesn’t exist in isolation. It’s connected to product mix, customer behavior, pricing changes, and operational capacity. When financial systems are disconnected from the operational data that drives performance, AI-generated forecasts and analyses miss critical context. CFOs get technically accurate numbers that fail to explain what’s actually happening in the business, forcing manual investigation every time results seem off.

Stardog connects financial and operational data through a semantic layer, allowing AI to reason across both using consistent assumptions. Revenue analysis automatically incorporates the product, customer, and operational context that explains performance. CFOs gain AI-assisted insights grounded in how the business actually operates, not just what the financial system recorded.

The board challenges a forecast. Auditors question a variance. The CFO needs to explain how the numbers were calculated and where they came from. But the analysis involved data from six systems, transformations done by three different teams, and assumptions made in spreadsheets no one documented. Tracing the logic takes days. By the time finance reconstructs the calculation path, confidence in the result has already eroded. Without clear lineage, every AI-assisted analysis becomes a liability instead of an asset.

Stardog preserves relationships, lineage, and applied assumptions within its semantic layer. When someone questions a number, finance can trace it back to its sources and show exactly how it was calculated. This gives CFOs a defensible audit trail for AI-assisted insights, turning “the system calculated it” into a transparent chain of logic that auditors and executives can verify.
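The kind of trace described here can be pictured with a small Python sketch. It is a simplified model under stated assumptions, not a description of how Stardog stores lineage: the `Node` class, the `trace` function, and the system and metric names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    rule: str = "source system"  # how this value was derived
    inputs: list = field(default_factory=list)  # upstream nodes it depends on


def trace(node: Node, depth: int = 0) -> list:
    """Return the derivation chain as indented lines, from result to sources."""
    lines = [f"{'  ' * depth}{node.name} <- {node.rule}"]
    for upstream in node.inputs:
        lines.extend(trace(upstream, depth + 1))
    return lines


# Hypothetical lineage for one forecast figure.
erp = Node("erp.invoices")
crm = Node("crm.contracts")
recognized = Node("recognized_revenue", "sum of invoiced amounts", [erp])
forecast = Node(
    "q3_forecast", "recognized_revenue plus signed pipeline", [recognized, crm]
)

if __name__ == "__main__":
    print("\n".join(trace(forecast)))
```

Because each derived value records its rule and its inputs, answering "where did this number come from?" becomes a walk over recorded relationships rather than a reconstruction from spreadsheets and scripts.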

Market conditions shift. New regulations take effect. Competitors make unexpected moves. The CFO needs updated scenarios immediately, but the request goes to a backlogged data team that needs two weeks to rebuild models with new assumptions. By the time the analysis is ready, the window for action has closed. When financial logic is scattered across systems and pipelines, even small changes require extensive rework before finance can see the impact.

Stardog applies shared meaning and connected context upfront, allowing finance teams to adjust assumptions and see impact quickly. Business rules and relationships are already established. Scenario modeling doesn’t require rebuilding data pipelines or waiting for IT. CFOs can respond to change with confidence because the semantic foundation supports rapid analysis without sacrificing accuracy.

Risk rarely appears in a single system or at a single moment. As organizations rely more on analytics and AI to surface issues early, CROs need confidence that risk signals are complete, consistent, and defensible across the enterprise.

A trading system flags unusual activity. Compliance shows the counterparty passed all checks. Operations reports the transaction fits normal patterns. Each system tells part of the story, but no one sees how the pieces connect until after the problem becomes visible to regulators. Risk indicators live in separate platforms built for different purposes, and CROs have no way to view exposure across the full context where it actually exists. By the time someone manually connects the dots, the window for intervention has closed. AI makes this worse by analyzing each silo independently, missing the cross-system patterns that signal real risk.

Stardog connects risk signals across systems so indicators can be viewed alongside the operations that generate them and the controls designed to manage them. AI doesn’t just analyze data in isolation; it reasons over relationships that span trading, compliance, operations, and finance. CROs gain a complete view of exposure without waiting for someone to manually piece together information after an issue has already surfaced.

Regulators want to know how risk decisions were made, not just what the final decision was. A loan was denied. An alert was escalated. A position was unwound. The CRO needs to show which controls applied, what data informed the decision, and why the conclusion was justified. But the logic is buried across risk engines, compliance workflows, and operational systems that don’t share context. Reconstructing the decision path requires forensic analysis of logs, scripts, and tribal knowledge. Documentation either doesn’t exist or doesn’t match what actually happened.

Stardog preserves relationships and decision paths across risk, compliance, and operational systems. When regulators ask how a decision was reached, CROs can demonstrate which controls were applied, what data was considered, and how rules were interpreted. This transparency reduces the effort required to respond to audits and regulatory reviews while giving CROs confidence that AI-assisted risk decisions can be defended under scrutiny.

Unrestricted data access creates compliance nightmares. Locking everything down creates operational gridlock. Risk teams that can’t see customer data miss early warning signs. Sales teams blocked from risk scores can’t make informed decisions. CROs face impossible tradeoffs between visibility and control, often discovering exposure only after someone accessed data they shouldn’t have or made decisions without context they needed. As AI adoption expands, these access issues multiply because AI systems need broad data access to be effective but create new exposure if that access isn’t properly governed.

Stardog governs access at a centralized semantic layer, applying consistent rules across risk, compliance, and operational systems. Risk teams get the visibility they need to spot issues early. Business teams get access to the context they need to operate effectively. AI systems work within clear boundaries that limit exposure without creating blind spots. CROs reduce enterprise risk while maintaining the data flows that keep the organization moving.

When AI operates on connected information governed by consistent assumptions, executive decision-making fundamentally shifts. Leaders stop debating whose numbers are correct and start focusing on what actions to take. Answers remain consistent across teams, tools, and moments in time, creating alignment instead of friction and momentum instead of delay.

This is what trusted AI looks like in practice. Insights are not just fast, they are dependable, defensible, and ready for action. Executives move forward with confidence because AI-driven recommendations are grounded in a foundation they can rely on, turning complexity into clarity and decisions into outcomes.

Executives choose Stardog to make trusted AI possible in complex enterprise environments. Stardog connects data where it lives and governs how it is used across analytics, applications, and AI, giving AI systems the structure and boundaries they need to operate reliably at scale.

Rather than debating inputs or rebuilding confidence with every decision, leaders gain a durable foundation for AI-driven decision-making. Stardog provides the control, consistency, and oversight required to deploy AI with confidence as it becomes part of everyday executive workflows.
